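After several rounds of industry consolidation and continual upgrades to the e-commerce ecosystem, digital-intelligent transformation has become the prevailing trend in the apparel industry. Building on Alibaba Cloud's mature product foundation, Zhiyi Technology (知衣科技) studies user needs closely, targets the industry's pain points, and provides apparel companies with a series of full-chain digital-intelligence solutions.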

-

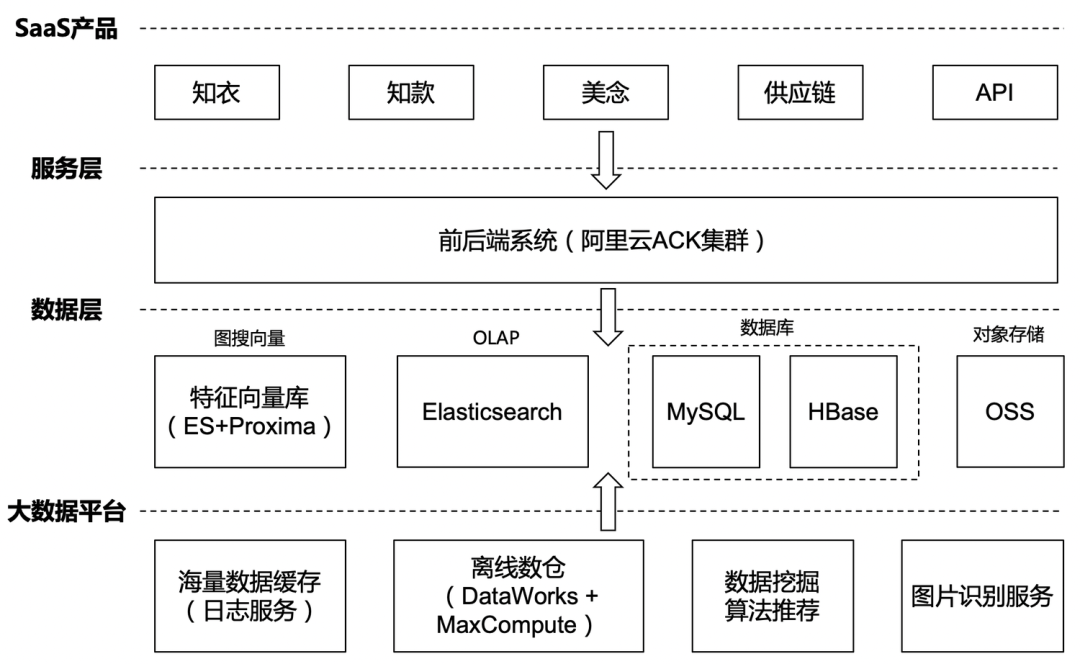

产品层:知衣目前有多款APP应用,如主打产品知衣、增强设计协作的美念等。除此之外,我们还提供定制化API向第三方开放数据接口服务和以图搜图的功能。从数字选款到大货成品交付的一站式服装供应链平台也是核心的能力输出。 -

服务层:相关产品的前后端系统都已经实现容器化,部署在阿里云的ACK容器服务集群 -

数据层:主要保存原始图片、业务系统产生的业务数据、以及OLAP数据分析服务

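  - Object Storage Service (OSS): stores the original images and holds an apparel style library at the billion scale.
  - MySQL database: OLTP business data.
  - HBase: data accessed in key-value (KV) form, such as product details and offline-computed rankings.
  - Feature vector store: vectors extracted by image recognition are cleaned and then stored in Proxima, the vector retrieval engine developed by Alibaba DAMO Academy.
  - ElasticSearch: used for point lookups and for metric statistics over small and medium-sized data sets. There are more than 1,000 design-element tags, spanning dimensions such as category, fabric, texture, craftsmanship, trims, style, silhouette, collar type, and color.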

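- Big data platform:
  - Log Service (SLS): buffers the massive volume of vector data produced by image recognition. SLS also offers SQL-query-based alerting: an alert fires if no vector data arrives, which helps the business detect problems promptly.
  - Offline data warehouse (DataWorks + MaxCompute): DataWorks integrates the Log Service that buffers the image feature vectors as a data source. Data development tasks then clean the raw feature vectors (for example, by deduplicating them) and store the results in MaxCompute. Finally, DataWorks writes the cleaned vectors from MaxCompute directly into Proxima on ElasticSearch.
  - Data mining and algorithmic recommendation: Python jobs deployed in ACK, mainly for recommendation work such as computing user-feature embeddings, recommending style images based on user behavior, and recommending similar bloggers.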

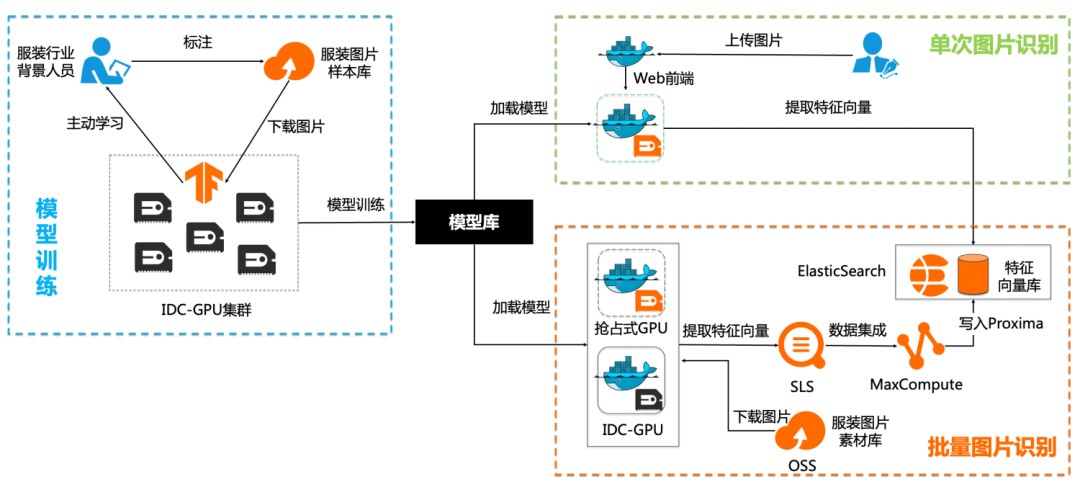

- Image recognition service: currently still deployed mainly in the IDC data center, where 5 to 6 GPU servers recognize images in batches.

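Zhiyi's big data solution has likewise evolved through several stages to meet our goals for cost, efficiency, and technology; ultimately, it serves business needs.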

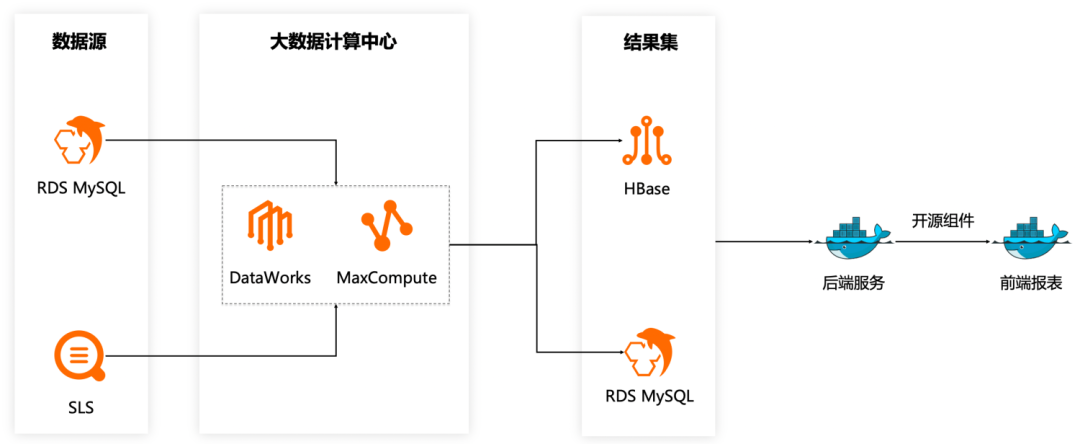

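Stage 1: Self-built CDH cluster in the IDC

Our business systems were deployed on Alibaba Cloud from the start. At the same time, we set up 10 servers in an IDC data center to build a CDH cluster and a Hive data warehouse. The computation flow was to synchronize production data from the cloud to CDH, run the computation on the CDH cluster, and send the results back to Alibaba Cloud to serve data.

The self-built CDH cluster saved on compute costs but brought quite a few problems. The biggest was operational complexity: the cluster needed dedicated specialists to run and manage it. When something went wrong, we had to search the web to track down the cause, which was inefficient.

Stage 2: DataWorks + MaxCompute replaces the CDH cluster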

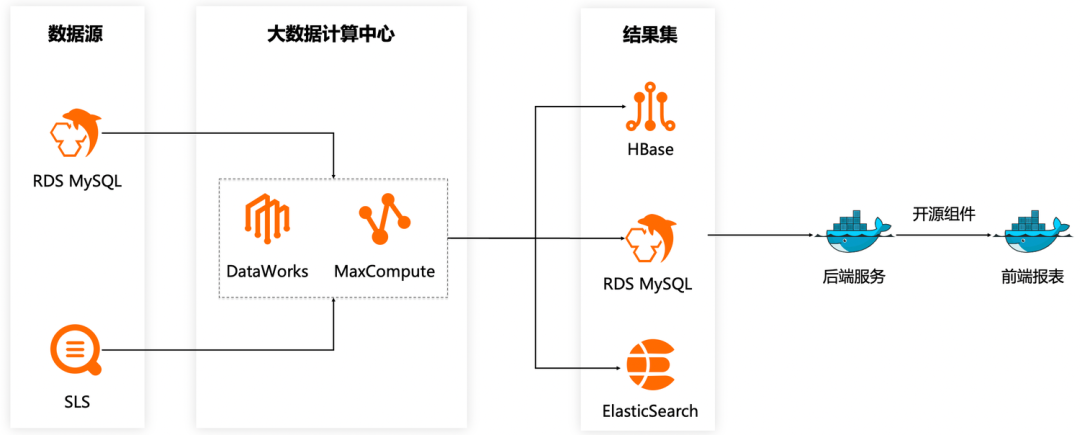

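Stage 3: ElasticSearch for ad hoc queries

知款 (Zhikuan) focuses on quickly discovering fashion trends and inspiration. It integrates five major image sources: social platforms, brand runway shows, retail and wholesale markets, Taobao-ecosystem e-commerce, and fashion street photography. This wealth of design references helps apparel brands and designers anticipate fashion trends quickly and accurately and keep up with market dynamics. Its trend analysis module needs to compute statistics on design-element tags under arbitrary combinations of conditions within a given quarter, and to output indicators such as rising trends, falling trends, and pie charts. This is also our largest query scenario by data volume: the amount of data scanned and analyzed approaches the order of one million.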
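The rising, falling, and pie-chart indicators can be illustrated with a minimal sketch. It assumes each style record carries a quarter and a list of design-element tags; the field names, the sample data, and the functions `tag_shares` and `tag_trend` are hypothetical. In production this kind of aggregation runs inside ElasticSearch rather than in application code.

```python
from collections import Counter


def tag_shares(records, quarter):
    """Return {tag: share of all tag occurrences} for one quarter (pie-chart data)."""
    counts = Counter(
        tag for r in records if r["quarter"] == quarter for tag in r["tags"]
    )
    total = sum(counts.values())
    return {tag: n / total for tag, n in counts.items()} if total else {}


def tag_trend(records, previous, current):
    """Compare tag shares between two quarters.

    Returns {tag: (current_share, change)}; change > 0 means the tag is rising.
    """
    before = tag_shares(records, previous)
    after = tag_shares(records, current)
    return {
        tag: (after.get(tag, 0.0), after.get(tag, 0.0) - before.get(tag, 0.0))
        for tag in set(before) | set(after)
    }


# Hypothetical sample data, not taken from the real style library.
records = [
    {"quarter": "2021Q3", "tags": ["cotton", "round-neck"]},
    {"quarter": "2021Q3", "tags": ["cotton", "v-neck"]},
    {"quarter": "2021Q4", "tags": ["wool", "round-neck"]},
    {"quarter": "2021Q4", "tags": ["wool", "v-neck"]},
]
trend = tag_trend(records, "2021Q3", "2021Q4")
```

In this sample, `wool` rises from a share of 0 to 0.5, `cotton` falls by the same amount, and the two collar tags stay flat.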

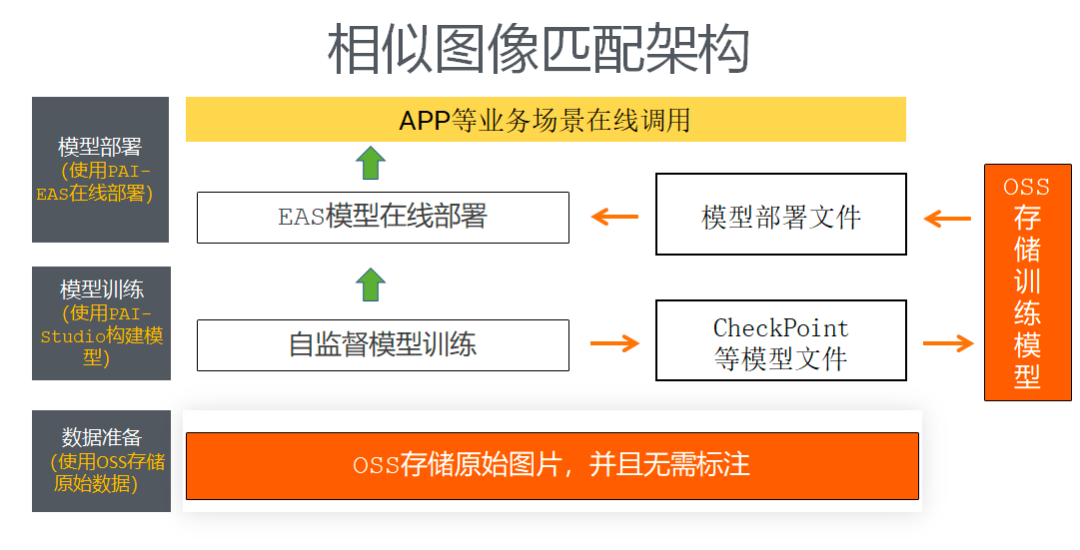

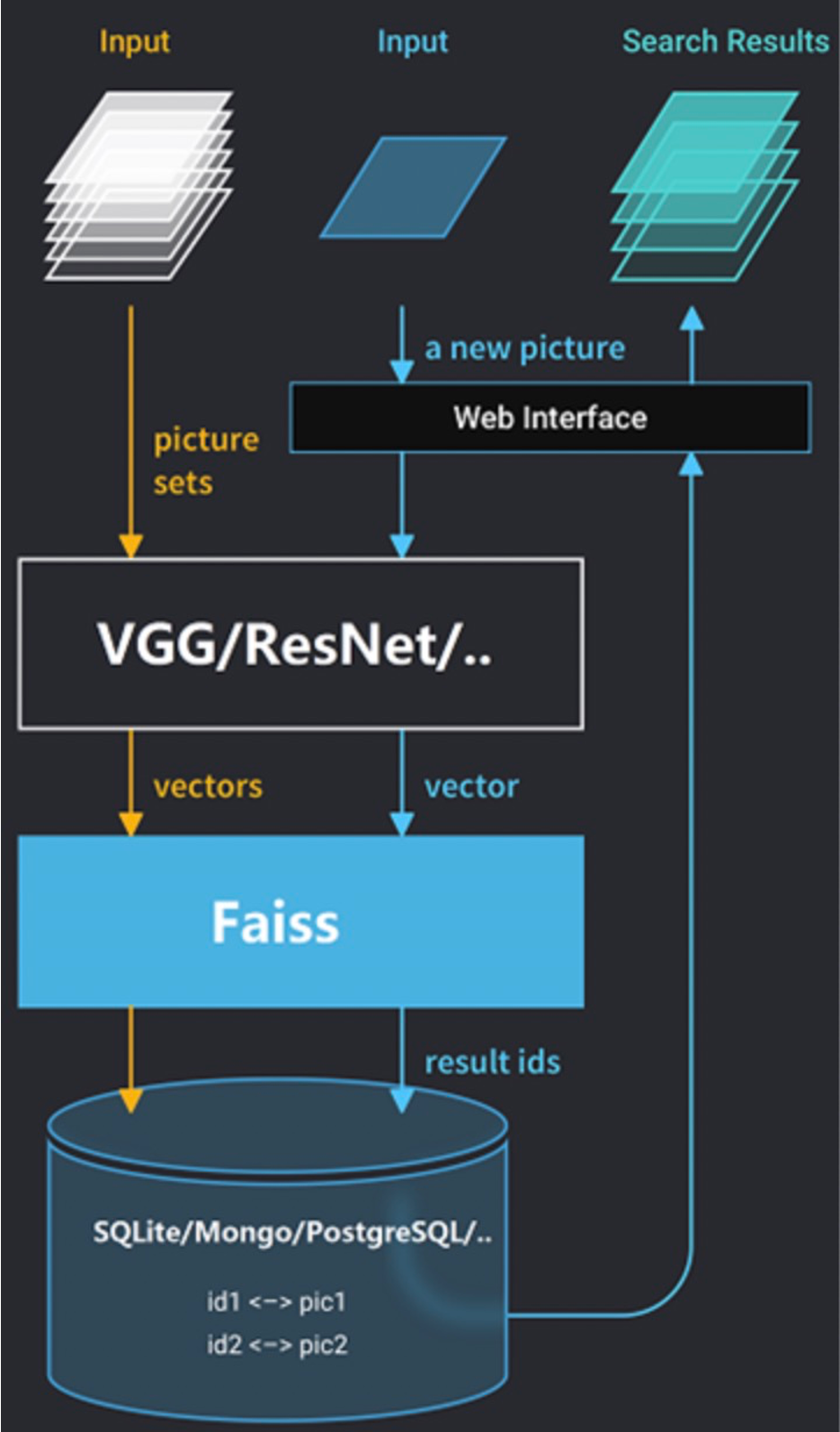

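Our core feature is search by image, which first requires recognizing the images in a massive library. We analyze every image in the library offline with machine learning, abstract each image into a high-dimensional (256-dimensional) feature vector, and then use Proxima to build all the features into an efficient vector index.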
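To make the idea of searching by feature vector concrete, here is a minimal brute-force sketch. The similarity metric is not stated in this article, so cosine similarity is an assumption, as are the function names and the tiny 3-dimensional sample vectors (the real vectors are 256-dimensional). A vector index such as the one Proxima builds avoids scanning every vector like this.

```python
import heapq
import math


def cosine_similarity(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def search(library, query, top_k=3):
    """Scan every vector in `library` ({image_id: vector}) and return the
    top_k most similar images as [(image_id, score)], best first."""
    scored = (
        (cosine_similarity(vec, query), image_id)
        for image_id, vec in library.items()
    )
    return [(image_id, score) for score, image_id in heapq.nlargest(top_k, scored)]


# Hypothetical 3-dimensional vectors for readability.
library = {
    "a": [1.0, 0.0, 0.0],
    "b": [0.0, 1.0, 0.0],
    "c": [1.0, 1.0, 0.0],
}
results = search(library, [1.0, 0.0, 0.0], top_k=2)
```

Here `results` lists image `a` first (identical direction to the query), followed by `c`.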

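Batch image recognition

Once a model is produced, it is packaged into a Docker image; running it as a container on GPU nodes lets us recognize massive numbers of apparel images and extract high-dimensional feature vectors. Because the extracted vectors are large in volume and need cleaning, we first buffer them in Alibaba Cloud Log Service (SLS). Data development tasks orchestrated by DataWorks then synchronize the vectors from SLS and clean them, including deduplication, before finally writing them into the Proxima vector retrieval engine.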
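The cleaning step can be sketched as follows. This is only an illustration: the record layout (`image_id`, `ts`, `vector`), the rule of keeping the newest record per image, and the function `clean_vectors` are assumptions, and the real cleaning runs as DataWorks data development tasks rather than as a standalone Python script.

```python
def clean_vectors(records, dim=256):
    """Drop malformed vectors, then keep the newest record per image_id."""
    latest = {}
    for rec in records:
        if len(rec["vector"]) != dim:
            continue  # discard vectors with an unexpected dimension
        kept = latest.get(rec["image_id"])
        if kept is None or rec["ts"] > kept["ts"]:
            latest[rec["image_id"]] = rec
    return list(latest.values())


# Hypothetical sample; 2-dimensional vectors keep the example readable.
records = [
    {"image_id": "img-1", "ts": 1, "vector": [0.1, 0.2]},
    {"image_id": "img-2", "ts": 2, "vector": [0.5, 0.6]},
    {"image_id": "img-1", "ts": 3, "vector": [0.3, 0.4]},  # newer duplicate
    {"image_id": "img-3", "ts": 4, "vector": [0.7]},       # malformed
]
cleaned = clean_vectors(records, dim=2)
```

After cleaning, only the newer `img-1` record and the `img-2` record remain.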

Single-image recognition

Faiss

-

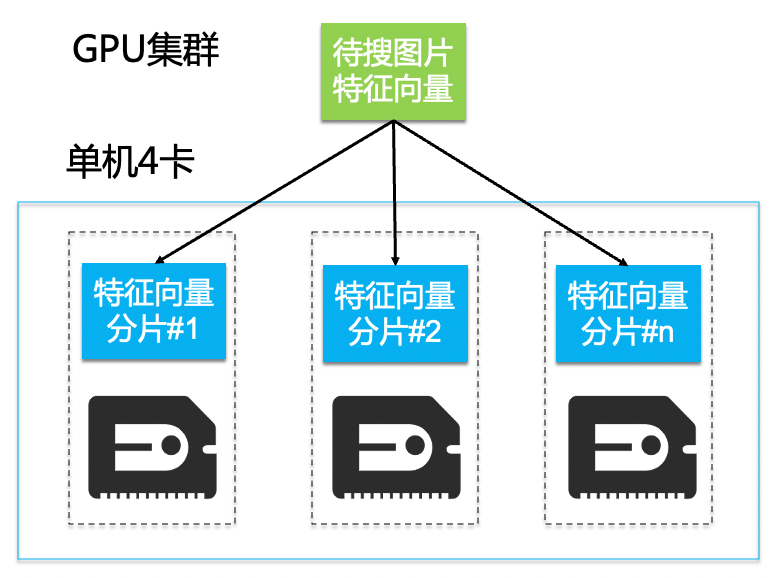

稳定性较差:分布式GPU集群有5~6台,当某一台机器挂了会拉长整个接口响应时间,业务的表现就是搜图服务等很久才有结果返回。 -

GPU资源不足:我们采用的是最基础的暴力匹配算法,2亿个256维特征向量需要全部加载到显存,对线下GPU资源压力很大。 -

运维成本高:特征库分片完全手动运维,管理比较繁琐。数据分片分布式部署在多个GPU节点,增量分片数据超过GPU显存,需要手动切片到新的GPU节点。 -

带宽争抢:图片识别服务和以图搜图服务都部署在线下机房,共享300Mb机房到阿里云的专线带宽,批量图片识别服务占用大带宽场景下会直接导致人机交互的图搜响应时间延长。 -

特定场景下召回结果集不足:因为特征库比较大,我们人工将特征库拆成20个分片部署在多台GPU服务器上,但由于Faiss限制每个分片只能返回1024召回结果集,不满足某些场景的业务需求。
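A rough back-of-the-envelope calculation shows why GPU memory was under pressure. The vector count, dimension, and shard count come from the text; the 4 bytes per value (float32) and the equal shard sizes are assumptions, so the results are estimates only.

```python
NUM_VECTORS = 200_000_000  # 200 million vectors, from the text
DIMENSIONS = 256           # from the text
BYTES_PER_VALUE = 4        # assumption: vectors are stored as float32
NUM_SHARDS = 20            # from the text; equal shard sizes are assumed

total_bytes = NUM_VECTORS * DIMENSIONS * BYTES_PER_VALUE
total_gib = total_bytes / 2**30
per_shard_gib = total_gib / NUM_SHARDS

print(f"total:     {total_gib:.1f} GiB")
print(f"per shard: {per_shard_gib:.2f} GiB")
```

Under these assumptions the raw vectors alone take about 190.7 GiB, or roughly 9.54 GiB per shard, before any index or working memory is counted.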

Proxima

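- High stability: the SLA of this out-of-the-box product service is guaranteed by Alibaba Cloud, with a highly available multi-node architecture. So far, we have rarely encountered interface timeouts.
- Algorithm optimization: the graph-based HNSW algorithm does not need GPUs, and its integration with Proxima includes engineering optimizations that greatly improve performance (recall over 10 million records takes only 5 milliseconds). With business growth, the number of feature vectors has now reached 300 million.
- Low operations cost: sharding is handled by the ES engine, so when data volume grows we simply scale out the ElasticSearch compute nodes.
- No bandwidth contention: the search-by-image service is deployed directly in the cloud and does not use the dedicated line. Timeout alerts for image-search queries have not recurred.
- Recall meets business needs: Proxima likewise takes the Top N most similar results per segment, aggregates them, and then filters by tag. Because there are many segments, far more data can be searched than before.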
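The recall flow described in the last point (Top N per segment, aggregate, then filter by tag) can be sketched as follows. This is not Proxima's or ElasticSearch's actual implementation; the hit layout `(score, image_id, tags)` and the function `merge_and_filter` are hypothetical.

```python
import heapq


def merge_and_filter(per_segment_hits, required_tag, limit):
    """Merge per-segment Top N lists and keep hits carrying `required_tag`.

    per_segment_hits: list of lists of (score, image_id, tags),
    each inner list already sorted by score, best first.
    """
    merged = heapq.merge(*per_segment_hits, key=lambda hit: hit[0], reverse=True)
    results = []
    for score, image_id, tags in merged:
        if required_tag in tags:
            results.append((image_id, score))
            if len(results) == limit:
                break
    return results


# Hypothetical Top N results from two segments.
seg_a = [(0.98, "a1", {"dress"}), (0.90, "a2", {"coat"})]
seg_b = [(0.95, "b1", {"coat"}), (0.80, "b2", {"dress"})]
hits = merge_and_filter([seg_a, seg_b], "dress", limit=10)
```

Because the tag filter runs after aggregation, the final result can never exceed the number of candidates the segments return in total. This is why a hard cap per shard can leave too few results once filtering is applied, and why more segments widen the candidate pool.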

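Iterative optimization of OLAP analysis scenarios

Standardized data modeling and data governance

Deeper cooperation on the image search solution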